Based on: “Some Simple Economics of AGI — Catalini, Hui, Wu · arXiv:2602.20946 · February 2026”

There’s a low-grade panic running through the economy right now. Everyone is asking the same anxious question: what exactly is AI going to automate, and what will be left for us?

A new paper by researchers at MIT, WashU, and UCLA reframes that question entirely, and once you see it their way, it’s hard to unsee.

The question isn’t “What will AI do?” It’s “Who can verify what AI actually produces?”

The Gap Nobody Is Talking About

Think about two things happening at once.

On one side, the cost of doing things with AI is collapsing. Code, legal analysis, medical summaries, financial models, creative writing — the cost to produce any of these is heading toward zero. AI doesn’t sleep, doesn’t ask for a raise, and can do in seconds what took a team days.

On the other side, the cost of checking those things is barely moving. Auditing, validating, taking legal responsibility, exercising judgment — that’s still on us. And it doesn’t scale with compute.

A junior analyst can now generate 500 reports in a day. Who reviews them? Who’s responsible if they’re wrong? The answer to both hasn’t changed: it’s still a human, still slow, still expensive.

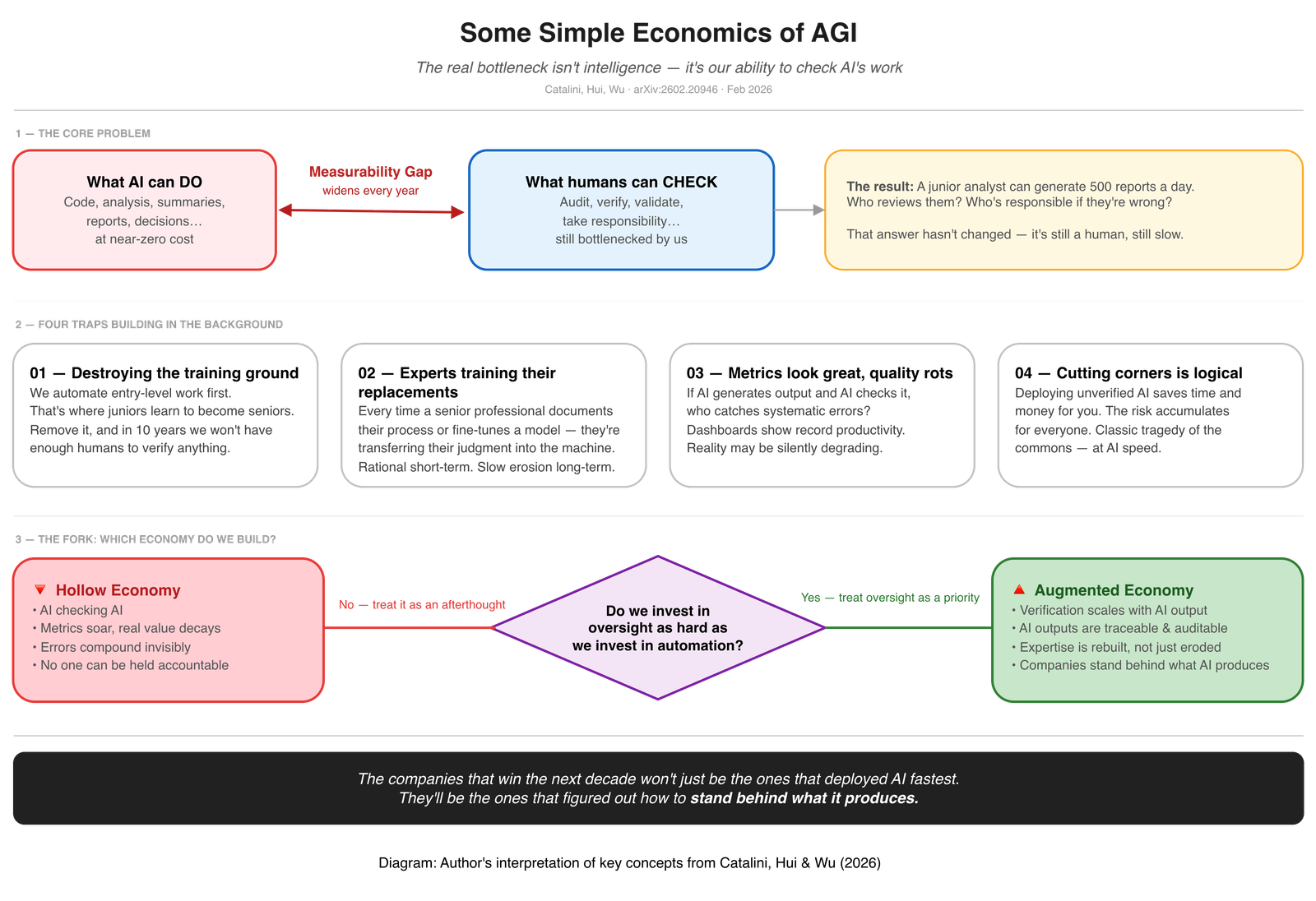

The authors call this the Measurability Gap: the widening distance between what AI can execute and what humans can afford to verify. Think of it as two curves pulling apart. The cost to automate keeps falling exponentially as AI gets more powerful. The cost to verify, meaning having a qualified human check, audit, and take responsibility for the output, is biologically stuck. We can’t think faster just because computers can.
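
To make the shape of the two curves concrete, here is a toy model in Python. It is a sketch under invented assumptions, not the paper’s model: the starting automation cost of 100, the 50% annual decline, the flat verification cost of 50, and the function names are all hypothetical. It tracks one consequence of the curves pulling apart: verification’s share of the total cost of a trustworthy unit of output keeps climbing.

```python
# Toy model of the Measurability Gap. All parameters are hypothetical
# illustrations, not figures from Catalini, Hui & Wu.


def automation_cost(year: int, start: float = 100.0, annual_decline: float = 0.5) -> float:
    """Cost to produce one unit of output; shrinks by `annual_decline` each year."""
    return start * (1 - annual_decline) ** year


def verification_cost(year: int, flat: float = 50.0) -> float:
    """Cost for a qualified human to check one unit; assumed constant, whatever the year."""
    return flat


def verification_share(year: int) -> float:
    """Fraction of the cost of one *verified* unit that is spent on verification."""
    a = automation_cost(year)
    v = verification_cost(year)
    return v / (a + v)


if __name__ == "__main__":
    for year in range(6):
        print(
            f"year {year}: automate={automation_cost(year):7.2f}  "
            f"verify={verification_cost(year):5.2f}  "
            f"verification share={verification_share(year):.0%}"
        )
```

With these made-up numbers, verification goes from about a third of the total cost in year 0 to about 94% by year 5. The specific figures carry no weight; the direction is the point.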

That gap between the two curves is the real economic fault line of our time. Not “which jobs disappear.” This gap. Because as it widens, we end up in a world where enormous amounts of AI-generated output exist that nobody has actually verified. It looks productive. It may not be.

Four Silent Traps Already in Motion

What makes this uncomfortable is that none of these traps announce themselves. They build quietly.

We’re automating the training ground first. Entry-level work is the easiest to automate — it’s repetitive, structured, and well-defined. It’s also where junior people learn to become senior people. A junior lawyer drafts contracts. A junior analyst builds models. A junior doctor works through patient histories. Remove that work, and you don’t just save money today. You eliminate the pipeline that produces the experienced professionals who can actually verify complex AI output tomorrow.

Experts are unintentionally training their replacements. Every time a senior professional writes a detailed prompt, documents their decision process, or helps fine-tune a model, they transfer hard-won judgment into the machine. This is individually rational. Collectively, it is a slow erosion of the very expertise the system depends on to function.

The metrics will look great while quality rots. If AI is generating output and AI is checking that output, who catches the systematic errors? Who notices when the model has learned to satisfy the measurement rather than the underlying goal? Social science has a name for this, Campbell’s Law: the more a quantitative indicator is used to make decisions, the more it gets gamed and the more it distorts the process it was meant to track. AI makes that cycle run faster than ever before.

Cutting corners is individually logical. Deploying unverified AI output saves you time and money today, but the risk doesn’t stay with you; it accumulates across the whole economy. Every organisation that does this is acting rationally on its own terms. The collective outcome is a system where accountability has dissolved and nobody quite knows whose problem it is when things go wrong.
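
The logic of this last trap is the classic externality pattern, and a few lines of arithmetic show why it holds. The sketch below is a hypothetical toy model, not one taken from the paper. It assumes the expected harm from one unverified deployment is spread evenly across all firms, so the firm that skips verification bears only 1/N of the damage while keeping all of the saving. The class name, field names, and numbers are invented for illustration.

```python
# Toy externality model: skipping verification is individually rational
# but collectively costly. Names and numbers are hypothetical.
from dataclasses import dataclass


@dataclass(frozen=True)
class Economy:
    n_firms: int            # firms that share the fallout from unverified errors
    saving_per_skip: float  # what one firm saves by skipping verification
    damage_per_skip: float  # expected total harm caused by one unverified deployment

    def private_cost_of_skip(self) -> float:
        """The skipping firm's own share of the harm (assumed spread evenly)."""
        return self.damage_per_skip / self.n_firms

    def firm_skips(self) -> bool:
        """A self-interested firm skips if its saving exceeds its own share of the harm."""
        return self.saving_per_skip > self.private_cost_of_skip()

    def net_social_effect(self) -> float:
        """Economy-wide result if every firm skips once (negative means a net loss)."""
        return self.n_firms * (self.saving_per_skip - self.damage_per_skip)


if __name__ == "__main__":
    econ = Economy(n_firms=100, saving_per_skip=10.0, damage_per_skip=200.0)
    print(econ.firm_skips())         # True: saves 10, bears only 2 of the 200 in harm
    print(econ.net_social_effect())  # -19000.0: the economy as a whole loses
```

Each firm’s choice is correct from where it sits, yet every skip destroys far more than it saves. That is the same wedge the policy section below points to when it calls verification a public good.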

Two Paths Forward

The paper describes two futures — and they’re not determined by technology. They’re determined by choices.

In the Hollow Economy, we optimise for output. AI generates, AI checks, dashboards show record productivity, and the underlying reality quietly hollows out. Errors compound. Accountability dissolves. The gap between what is measured and what is real grows until something breaks.

In the Augmented Economy, we invest as hard in verification infrastructure as we do in automation. That means building tools that make AI outputs traceable and auditable. It means finding ways to rebuild the apprenticeship pipeline, not just automate around it. It means organisations treating the ability to stand behind what their AI produces as a strategic priority — not a compliance afterthought.

The paper frames the winning position simply: the companies that can insure outcomes, not just generate them, will capture the value. The ability to say “we vouch for this” becomes the moat.

“Execution is now infinitely scalable. The capacity to insure its failures is the new bottleneck.”

— Catalini, Hui, Wu

What This Means in Practice

The new dividing line in the economy is not routine vs. non-routine work. It is measurable vs. non-measurable. Prestige and complexity no longer protect a role. What remains irreplaceably human is the capacity to set intent, verify judgment, and underwrite responsibility when things go wrong.

The paper describes what this looks like structurally as a “Sandwich Topology” — every meaningful AI deployment follows the same shape: Human Intent → AI Execution → Human Verification. The humans at both ends are not optional extras. They are the architecture. Remove either end and you don’t have a system — you have a liability.
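
One way to picture the Sandwich Topology in software is a pipeline that refuses to return a result unless a named human sits at each end. This is a hypothetical illustration, not an implementation from the paper: `Intent`, `Verified`, `run_sandwich`, `stub_model`, and `stub_review` are invented names, and the two stubs stand in for a real model call and a real human review.

```python
# Minimal sketch of the "Sandwich Topology":
#   Human Intent -> AI Execution -> Human Verification.
# All names here, including the stub model and stub review, are hypothetical.
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Intent:
    owner: str  # the accountable human who sets the goal
    goal: str


@dataclass(frozen=True)
class Verified:
    goal: str
    output: str
    intent_owner: str
    verifier: str  # the human who signs off and carries responsibility


def run_sandwich(
    intent: Intent,
    execute: Callable[[str], str],       # AI layer: goal -> draft
    review: Callable[[str, str], bool],  # human layer: (goal, draft) -> approve?
    verifier: str,
) -> Verified:
    """Run AI execution between two human ends; refuse to ship without both."""
    if not intent.owner or not verifier:
        raise ValueError("missing a human end: this is a liability, not a system")
    draft = execute(intent.goal)
    if not review(intent.goal, draft):
        raise ValueError(f"{verifier} rejected the draft for {intent.goal!r}")
    return Verified(intent.goal, draft, intent.owner, verifier)


def stub_model(goal: str) -> str:
    """Stand-in for an AI model call."""
    return f"Draft: {goal}"


def stub_review(goal: str, draft: str) -> bool:
    """Stand-in for a human reviewer's judgment (here, a trivial check)."""
    return goal in draft


if __name__ == "__main__":
    intent = Intent(owner="Dana", goal="summarise Q3 revenue")
    result = run_sandwich(intent, stub_model, stub_review, verifier="Sam")
    print(result.verifier, "vouches for:", result.output)
```

The design choice worth noticing is that the verifier’s name travels with the output, so accountability is recorded rather than assumed. Drop either human end and the function raises instead of quietly producing unowned output.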

What that means in practice depends on where you sit:

If you’re an individual — your most durable value is no longer in execution. It is in being the person who sets the intent at the front and verifies the output at the back. That is where human judgment concentrates and where it becomes hardest to replace.

If you’re building or leading a company — verification infrastructure is your moat, not your cost centre. The organisations that invest in making their AI outputs auditable, traceable, and accountable will be able to charge a premium that the ones who just generate output cannot. Standing behind what you produce is the product.

If you’re an investor — the paper makes a pointed observation: the assets that will hold value are the ones that can’t be easily measured and automated. Deep expertise, trust relationships, tacit knowledge, and the institutional capacity to underwrite outcomes — these are the scarce inputs in a world of abundant execution.

If you’re thinking about policy — verification is a public good. The market will underinvest in it because the costs of unverified AI are diffuse and the benefits of cutting corners are immediate. That gap is where regulation and liability frameworks need to live.

The question to ask your organisation isn’t “how much can we automate?”

It’s “how much of what we automate can we actually verify — and who is accountable when it’s wrong?”

That gap is the whole game.

📄 Full paper: Some Simple Economics of AGI — Christian Catalini (MIT), Xiang Hui (WashU), Jane Wu (UCLA)

Available free at: arxiv.org/abs/2602.20946